· career · 7 min read

Beyond Coding: The Importance of Ethical AI Knowledge in the OpenAI Interview

Learn why ethical AI knowledge matters in OpenAI interviews, how to demonstrate it effectively, and practical frameworks, sample answers, and questions to ask that show you understand responsible AI in engineering roles.

Outcome-first: by the end of this article you’ll know exactly how to show interviewers - clearly and persuasively - that you not only write great code, but you build responsible AI systems.

Why this matters now. Interviews for AI-focused roles increasingly evaluate candidates on ethics and safety as well as algorithms and systems. The world expects deployed models to be robust, fair, private, and maintainable. Companies like OpenAI specifically care about these dimensions because the cost of getting them wrong can be enormous: real-world harm, reputational damage, and legal exposure.

This article explains what interviewers are looking for, gives concrete frameworks you can use in answers, shares sample responses to common prompts, and lists practical ways to demonstrate ethical competence in live interviews and take-home tasks.

What interviewers are assessing (short list)

- Awareness: Do you know common ethical failure modes of ML systems (bias, privacy leaks, hallucinations, unsafe outputs)?

- Reasoning: Can you weigh trade-offs (accuracy vs. safety, latency vs. red-teaming, openness vs. misuse risk)?

- Practice: Do you propose concrete mitigations (logging, tests, human-in-the-loop, differential privacy)?

- Communication: Can you explain risks and decisions clearly to engineers, product managers, and non-technical stakeholders?

- Ownership: Will you document, monitor, and iterate on safety after deployment?

If you can confidently cover these five areas in examples and system-design answers, you will stand out.

A simple interview-ready ethics framework

Use this five-step sequence in answers. It’s concise and memorable.

- Identify - What specific harms or failure modes could the system produce?

- Assess - Who is affected? What is the likelihood and impact? Are some groups disproportionately affected?

- Mitigate - Concrete engineering or product controls (testing, filters, architecture changes).

- Validate - Metrics, red-team tests, user studies, simulation, disaggregated evaluation.

- Monitor & Document - Telemetry, incident response, model cards and runbooks.

Say the framework out loud when appropriate. It demonstrates structure and pragmatic thinking.

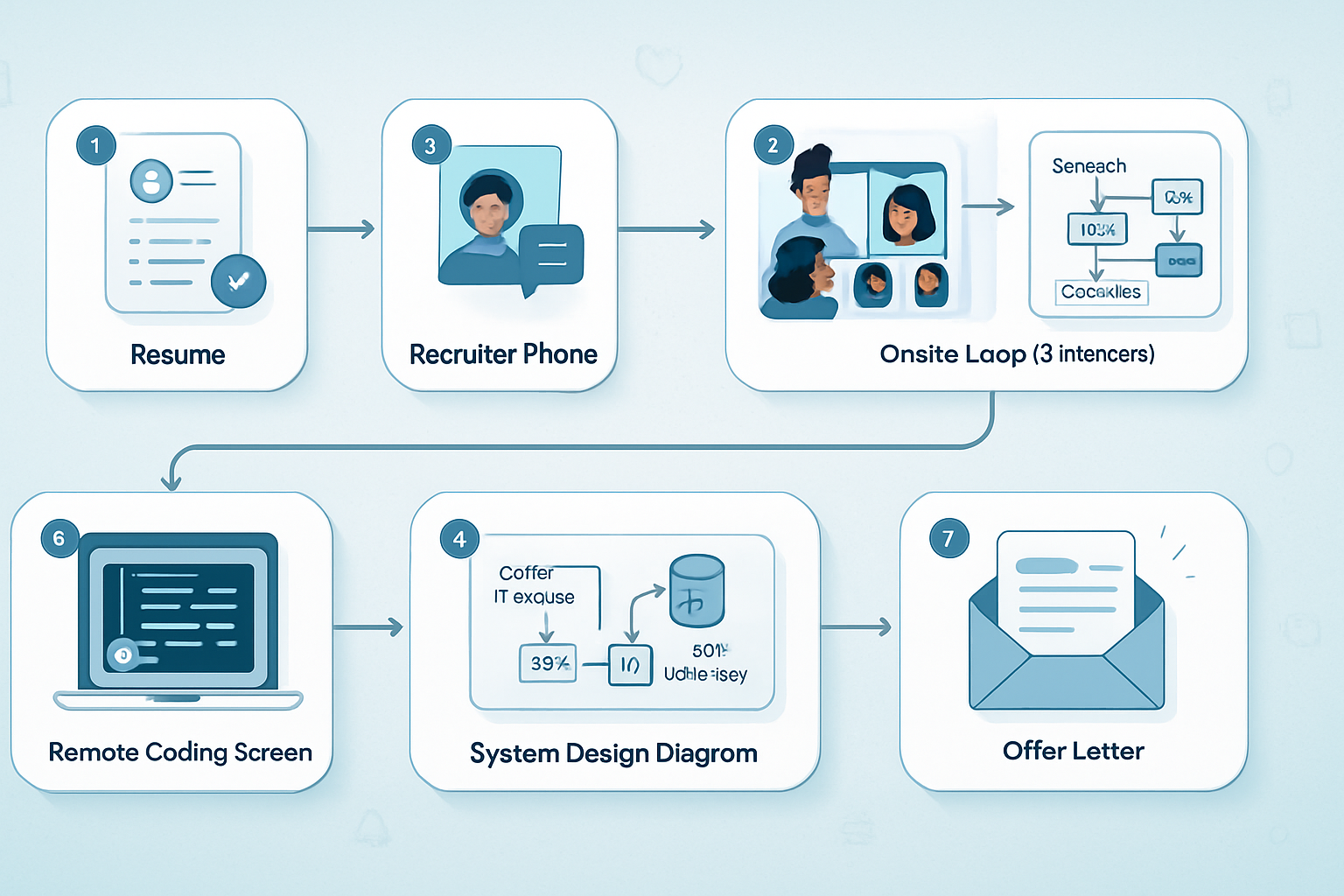

How to show ethics during different interview stages

Whiteboard/system-design: Explicitly sketch where harms can appear (data collection, preprocessing, model behavior, API surface, downstream uses). Use the five-step framework and include monitoring and rollback paths in the architecture.

Behavioral/onsite: Pick a concrete past example. Use the STAR format (Situation, Task, Action, Result) and emphasize the Assess->Mitigate->Validate steps plus the measurable outcome.

Take-home projects: Add tests and documentation. Provide a short “Ethics considerations” README section that lists edge cases, threat models, and how you’d instrument the system in production.

Live coding: When asked to implement something that interacts with user content, mention rate limits, content filters, logging for abuse detection, and opt-in consent flows if relevant.

Concrete talking points and phrasing (copy-and-paste friendly)

When asked “How do you think about ethical risks?”

Short: “I map risks across the ML lifecycle: data, model, and deployment, and then design mitigations that are measurable and auditable.”

Longer: “First I identify plausible harms and affected groups. Then I quantify where possible, choose mitigations that reduce harm with minimal utility loss, add monitoring and human-in-the-loop safeguards, and document decisions in model cards and runbooks.”

When asked “How would you reduce hallucinations?”

- “I’d combine training data improvements, prompt-engineering/conditioning, grounding the model in verified sources, and runtime checks. I’d add a confidence or verification stage that either refuses to answer or routes to a retrieval+verification path. And I’d instrument to measure hallucination rates on targeted datasets.”

When asked “How would you prevent model misuse?”

- “Define misuse threat models, limit high-risk capabilities by API policy, rate limits, and gating, and require additional controls (e.g., human review or business verification) for sensitive use-cases. Maintain robust abuse detection and a rapid response playbook.”

These answers are concise, structured, and show both technical and operational thinking.

Practical engineering measures to mention (technical depth)

- Data governance: provenance, labeling guidelines, bias audits, and selective data exclusions.

- Privacy: differential privacy, secure aggregation, encryption-in-transit and at-rest, and minimal data retention.

- Fairness testing: disaggregated metrics, subgroup performance checks, counterfactual and causal analyses.

- Robustness: adversarial testing, input sanitization, and domain generalization checks.

- Safety controls at inference: content filters, classifiers for high-risk outputs, rejection or clarification responses, and rate limits.

- Human oversight: escalation paths, human review thresholds, and hybrid workflows for edge cases.

- Observability: logs, alerts, dashboards for safety metrics, and post-deployment experiments.

- Documentation: model cards, datasheets for datasets, and runbooks for incidents.

Mentioning specific implementations - e.g., hooks in CI to run safety tests, or how to log inputs/outputs with privacy-preserving identifiers - shows that you think in terms of shipping software.

Example mini system-design: a public assistant API

Problem: design an API for a conversational assistant that can answer domain-specific legal and medical questions.

- Identify: misinformation/harm in medical/legal advice; privacy leaks; malicious use.

- Assess: high severity if incorrect; medical/legal sensitive groups.

- Mitigate (architecture):

- Require user intent and context; provide explicit “not a substitute for professional advice” messaging.

- Use retrieval from vetted sources and cite sources in responses.

- Gate sensitive endpoints: require partner verification or human review for high-risk queries.

- Implement an instrumentation layer to log flagged queries and model outputs in an auditable store.

- Validate: run red-team scenarios, measure incorrect advice rate on domain test suites, conduct clinician/lawyer reviews.

- Monitor & Document: deploy dashboards for safety signals, publish model cards and usage policies, and prepare incident response playbooks.

Saying this on a whiteboard - and sketching the flow from user -> retrieval -> model -> safety filter -> response -> logging -> human escalation - will reflect mature thinking.

Sample behavioral story (brief, STAR-ready)

Situation: We launched a recommendation feature and saw disparate performance for a user subgroup.

Task: I was responsible for diagnosing and fixing it.

Action: I ran disaggregated evaluation, traced bias to imbalanced labels, retrained with stratified sampling and added fairness constraints, then added monitoring to catch regressions.

Result: Group-level error dropped 35% and the fix preserved overall utility. We documented the changes and added the subgroup to our CI tests.

That’s concise, measurable, and shows ownership.

Questions you can ask interviewers (signals of depth)

- “How does the team measure and track model safety once a model is deployed?”

- “What kinds of adversarial testing or red-team exercises do you perform?”

- “How are ethical trade-offs weighed against product metrics like latency or engagement?”

- “What incident response or rollback playbooks exist for model failures?”

- “How does the team document model limitations for downstream engineers and users?”

These show you’re thinking beyond code and into operations and governance.

What to include in take-home projects

- A short ethics README: threat models, assumptions, and mitigations.

- Tests that simulate misuse or edge-case inputs.

- Simple dashboards or logs (even a CSV) demonstrating how you’d monitor safety metrics.

- A model card or short documentation explaining limitations and intended uses.

Hiring teams notice when candidates proactively document trade-offs.

Resources to cite in interviews and read before your interview

- OpenAI usage and safety information: https://openai.com/policies and https://openai.com/safety

- Model Cards (Mitchell et al.): https://arxiv.org/abs/1810.03993

- Datasheets for Datasets (Gebru et al.): https://arxiv.org/abs/1803.09010

- ACM Code of Ethics: https://www.acm.org/code-of-ethics

- Partnership on AI: https://partnershiponai.org/

Referencing one or two of these during an interview shows you’ve done your homework.

Final checklist to practice before the interview

- Prepare 2–3 behavioral examples where you considered ethics in design or production.

- Memorize the five-step framework and practice applying it to common problems (privacy, bias, hallucinations, misuse).

- Add an ethics README to any take-home exercise and include at least one test or metric for safety.

- Have 3 intelligent questions to ask the interviewer about monitoring, incident response, and governance.

- Be ready to trade off: practice explaining why you’d accept a small performance loss to avoid a large safety risk.

Ethics is not a separate exercise. It’s part of shipping reliable, responsible systems.

Careers at AI companies reward engineers who can combine code, product judgment, and ethical foresight. If you can show that you think about harms, measure them, and design repeatable mitigations, you’ll move from being “just another coder” to being a trusted builder.

Further reading

- OpenAI safety and policy pages: https://openai.com/policies and https://openai.com/safety

- Mitchell et al., Model Cards: https://arxiv.org/abs/1810.03993

- Gebru et al., Datasheets for Datasets: https://arxiv.org/abs/1803.09010

- ACM Code of Ethics: https://www.acm.org/code-of-ethics